I use Extended Events almost exclusively for capturing query metrics. They are the most consistent and lowest cost mechanism for getting the time and resources used by a query. They can be filtered, combined with other events, they’re just marvelous… until you capture an execution plan.

Execution Plans in Extended Events

Don’t get me wrong. Capturing execution plans with Extended Events is the way to go if you’re attempting to automate the process of capturing plans on specific queries on an active system. It’s step two that bugs me. So, we capture the plan. Here’s an example script. Captures all the various plans and the batch, puts ’em together using causality tracking:

CREATE EVENT SESSION ExecutionPlansOnAdventureWorks2014

ON SERVER

ADD EVENT sqlserver.query_post_compilation_showplan

(WHERE ( sqlserver.database_name = N'AdventureWorks2014')),

ADD EVENT sqlserver.query_post_execution_showplan

(WHERE ( sqlserver.database_name = N'AdventureWorks2014')),

ADD EVENT sqlserver.query_pre_execution_showplan

(WHERE ( sqlserver.database_name = N'AdventureWorks2014')),

ADD EVENT sqlserver.sql_batch_completed

(WHERE ( sqlserver.database_name = N'AdventureWorks2014'))

ADD TARGET package0.event_file

(SET filename = N'C:\PerfData\ExecutionPlansOnAdventureWorks2014.xel')

WITH (MAX_MEMORY = 4096KB,

EVENT_RETENTION_MODE = ALLOW_SINGLE_EVENT_LOSS,

MAX_DISPATCH_LATENCY = 30 SECONDS,

MAX_EVENT_SIZE = 0KB,

MEMORY_PARTITION_MODE = NONE,

TRACK_CAUSALITY = ON,

STARTUP_STATE = OFF);

GO

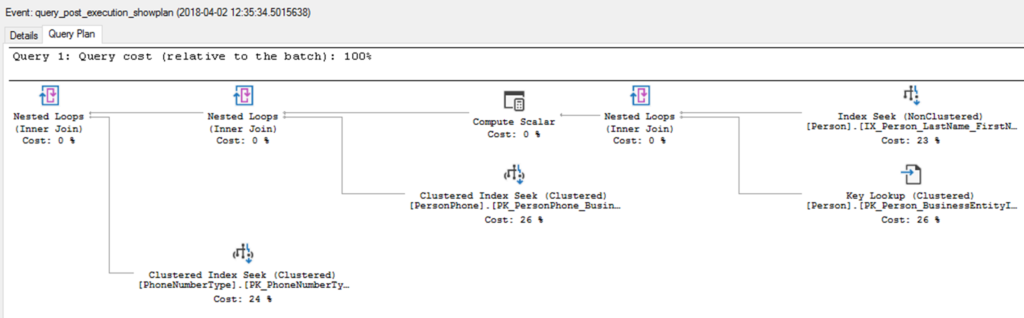

Cool beans. Does what I want, when I want, where I want. Excellent. Here is a captured plan shown graphically in SSMS:

Notice anything missing? Yeah, the first operator, the SELECT operator (technically, not really an operator, but they don’t have any name or designation in the official documentation, so I’m calling them operators). It’s not there. Why do I care?

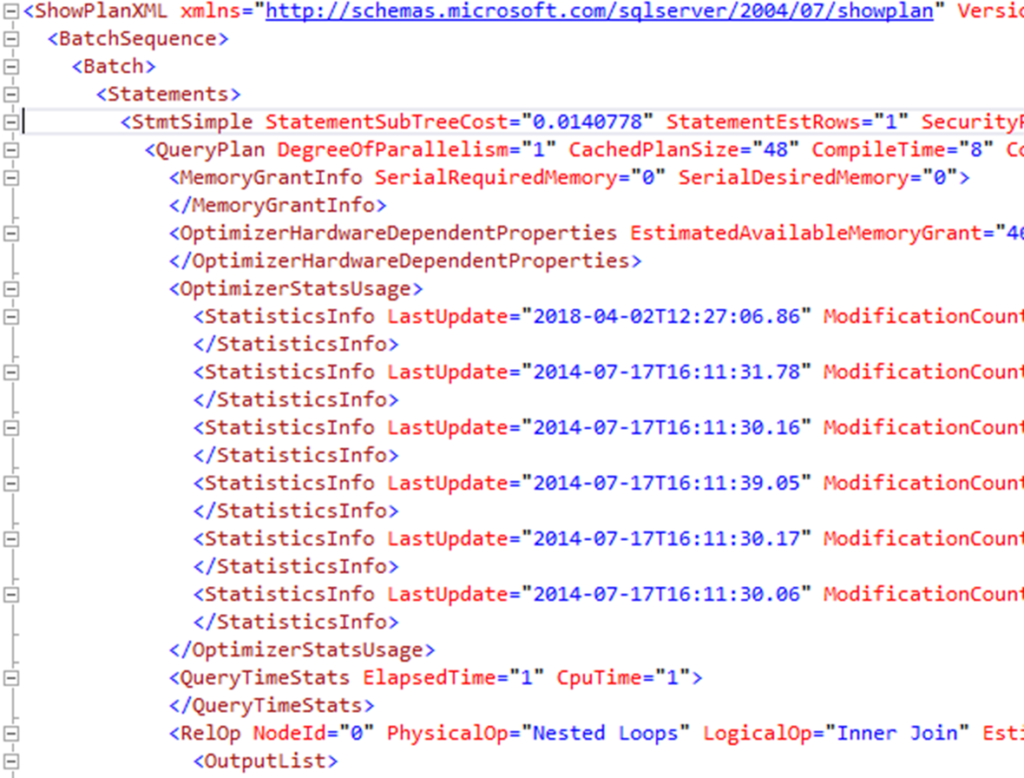

Because it’s where all the information about the plan itself is stored. Stuff like, Cached Plan Size, Compile Time, Optimizer Statistics Usage, Reason for Early Termination, is all there, properties and details about the plan itself. Now, the weird thing is, if you look to the XML, as shown here, all that data is available:

What’s going on, as near as I can guess, is that the XML captured by Extended Events is ever so slightly different and it just can’t display that first operator correctly. You can see it for yourself. Capture a plan using Extended Events and one using SMSS (or Query Store, or the DMVs, they all behave the same way), then look at the XML for each plan. They are a little different. Just enough to change how the plan displays within the graphical viewer. Odd stuff.

Conclusion

This is a pain. It’s not an end of the world scenario, just a pain. I’d prefer to not have to dig into the XML when I capture a plan with Extended Events, but, for the moment, you do. Hopefully we’ll see this changed with a new cumulative update sooner rather than later. In the mean time, you know where to look for that information, the XML.

One point worth noting, capturing execution plans through Extended Events is expensive (much cheaper than Trace Events, but still expensive). Be judicious in the use of this functionality.

If you’re not yet using Extended Events, or, you want to know more about how to use them, and a whole bunch of other tooling in SQL Server as part of query tuning, then come and spend the day with me. I’m taking this show on the road:

For SQLSaturday Philadelphia on April 20, 2018. Please sign up here.

For SQLSaturday NYC on May 18, 2018. Go here to register.

For SQLSaturday Indianapolis (and my first time speaking in Indiana) on August 10, 2018. Please go here to sign up.

For SQLSaturday Oslo on August 31, 2018. Click here right now to register.

Grant, Very interesting stuff there. I just found this site, which is great, and I’m wondering if you would entertain a tricky TSQL coding challenge? It would make a great video for SQL developers, for sure, as it has several dimensions to it.

The problem is this – you have two tables, one named SPECS with 500 rows of data in 12 columns, the other is named DELS and has about 7,000 rows of location information in 14 columns, for multiple deliveries.

I need to distribute some of the SPECS data, into certain strings, >conditionally<, to the DELS rows where the "DelCode" value in that Column/Row matches one or more of the "SpecCodes" values within a comma-delimited string in that Column/Row in SPECS, that can have from 1 to as many as 15 values. Each match to the single "DelCode" value will yield an Output Row, but many of the SPECS Rows will have a match to a number of DELS Rows , so the Output is frequent.

Additionally, the Output has to go to a third table (an exported dataset really), and has some other columns to include – a few that repeat the same parameter value for every row of Output, and a few that increment by one or change a date to two days later for each Output Row, or concatenate a declared variable value (that changes based on another value in the same SPECS row) with one of the 'boilerplate' type DELS strings – that just had some new data (a substring) inserted via a REPLACE. Depending on the variables and dates, all three of the above could occur in one Output Row. Yep, it's rather complicated.

I figured out how to make this work in Excel VBA, with the SPECS and DELS data in sheets, and the desired Output rows in a third sheet, formed using various Excel sheet formulas and VBA to do looping, functions, and other things.

But it takes VBA 20 minutes to create the 35,000 Rows of Output – because for every row in SPECS, it has to Output a new Row combining all of the above, based on one or more matches in DELS Rows, to each SPECS Row's multiple values in the string.

In other words, one row of SPECS may get a match on a certain column's value in 378 of the 2,000 DELS rows – ergo 378 new Output Rows needed. The next Row of SPECS might match to only two rows, or maybe none – it's like a crosstab where the Output total is always in the 25,000 to 40,000 Rows per week, for every run.

There is no pattern – it's just data with tags and various codes that either match, or don't. So 20 SPECS Rows might result in 3,000 Output Rows, but the next 50 SPECS rows might result in just 35 or 52 Output Rows, or whatever. So you have to find a match, then feed strings into other strings, etc. – then paste into an 18-Column Output row. It can easily be 200,000 or more data values that are conditionally copied, inserted, or concatenated.

I'm thinking there's gotta be a way to use TSQL Window Functions to do this in a much faster way, but can't find the approach that works. I've done many complicated SQL queries for years with strings, formulas, and cascading CASE statements, all sorts of concatenations and whatnot, but this is a stumper for me. I've worked with Access, SQL, and MySQL, as well as queries against huge millions-of-rows DB2 tables, and have Mgmt Studio and SS 2017 edition, but I'm stuck on the right code approach with Windowing, for fast execution. Outputs can be in TempDB or held in memory, and at the end it just dumps the 30,000 or more Output rows to an Excel or csv file / done.

Can you help, or post this as a 'TSQL challenge' on your site? I'd be happy to construct and send an Excel file with real-world data that, but this is a large client, so have to 'dummy-data' the example. I can send that in a day or two, so please let me know,

Thanks a bunch, Bill in Dallas.

I’m not ignoring you. This is just a bit dense and I haven’t had time to unpack it all yet. In the mean time, you might want to go to SQL Server Central and see what kind of answers you can get by posting this as a question to the forums there.

[…] Grant Fritchey notices something odd about execution plans grabbed from an Extended Events session: […]

[…] SQL Server only has one kind of execution plan (more on that coming very soon). However, due to a bug in both Extended Events and Trace Events, the plans captured there, while identical in every other way to every other way […]